Dreamer leverages the PlaNet world model, which predicts outcomes based on a sequence of compact model states that are computed from the input images, instead of directly predicting from one image to the next. From predictions of this model, the agent then learns a value network to predict future rewards and an actor network to select actions. The actor network is used to interact with the environment.
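Predicting in a compact state space, rather than from one image to the next, can be illustrated with a toy sketch. Everything here is an assumption made for illustration: `encode`, `transition`, `reward`, and `imagine` are hypothetical stand-ins written as simple deterministic functions, whereas the PlaNet model is a learned neural network. The point is only that the image is encoded once and all further prediction happens on small state vectors.

```python
from typing import List, Sequence, Tuple

Vector = List[float]


def encode(image: Sequence[float], size: int = 2) -> Vector:
    """Hypothetical encoder: average equal chunks of a flat pixel list."""
    chunk = len(image) // size
    return [sum(image[i * chunk:(i + 1) * chunk]) / chunk for i in range(size)]


def transition(state: Vector, action: float) -> Vector:
    """Hypothetical latent dynamics: decay the state and add the action."""
    return [0.9 * s + action for s in state]


def reward(state: Vector) -> float:
    """Hypothetical reward: higher when the state is closer to the origin."""
    return -sum(s * s for s in state)


def imagine(image: Sequence[float],
            actions: Sequence[float]) -> Tuple[List[Vector], List[float]]:
    """Encode the image once, then roll forward purely in the compact state space."""
    state = encode(image)
    states, rewards = [], []
    for action in actions:
        state = transition(state, action)  # no image is generated here
        states.append(state)
        rewards.append(reward(state))
    return states, rewards


states, rewards = imagine([1.0, 1.0, 1.0, 1.0], actions=[0.0, 0.0])
```

Because each step operates on a short vector instead of a full image, many such imagined sequences can be evaluated cheaply, which is the property the value and actor networks rely on.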

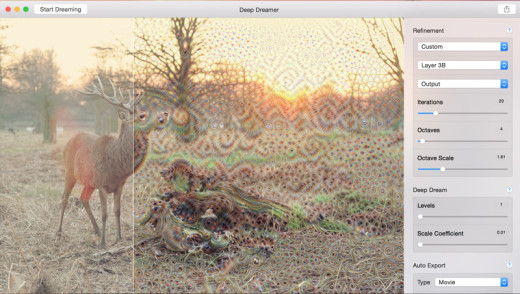

The three processes of the Dreamer agent.

Dreamer consists of three processes that are typical for model-based methods: learning the world model, learning behaviors from predictions made by the world model, and executing its learned behaviors in the environment to collect new experience. The three processes, which can be executed in parallel, are repeated until the agent has achieved its goals. The world model is learned from past experience. To learn behaviors, Dreamer uses a value network to take into account rewards beyond the planning horizon and an actor network to efficiently compute actions.

By learning to compute compact model states from raw images, the agent is able to efficiently learn from thousands of predicted sequences in parallel using just one GPU. Dreamer achieves a new state-of-the-art in performance, data efficiency and computation time on a benchmark of 20 continuous control tasks given raw image inputs. To stimulate further advancement of RL, we are releasing the source code to the research community.
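The role of the value network mentioned above, accounting for rewards beyond the planning horizon, can be illustrated with a simple bootstrapped return. This is a minimal sketch under stated assumptions, not necessarily the exact estimator Dreamer uses: the function name `bootstrapped_return`, the discount factor, and the example numbers are all hypothetical. The imagined rewards cover the horizon, and a single value estimate at the last imagined state stands in for everything after it.

```python
from typing import Sequence


def bootstrapped_return(rewards: Sequence[float],
                        final_value: float,
                        discount: float = 0.99) -> float:
    """Sum discounted rewards over the horizon, then add the discounted
    value estimate of the final state to cover rewards beyond it."""
    ret = final_value
    for r in reversed(rewards):
        ret = r + discount * ret
    return ret


# Three imagined rewards, with and without a value estimate at the horizon.
with_value = bootstrapped_return([1.0, 1.0, 1.0], final_value=10.0, discount=0.5)
without_value = bootstrapped_return([1.0, 1.0, 1.0], final_value=0.0, discount=0.5)
```

With these numbers, `without_value` is 1.75 and `with_value` is 3.0. The difference is the contribution of rewards that lie past the three imagined steps, which a purely horizon-limited planner would ignore.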

In the past, it has been challenging to learn accurate world models and leverage them to learn successful behaviors. While recent research, such as our Deep Planning Network (PlaNet), has pushed these boundaries by learning accurate world models from images, model-based approaches have still been held back by ineffective or computationally expensive planning mechanisms, limiting their ability to solve difficult tasks. Today, in collaboration with DeepMind, we present Dreamer, an RL agent that learns a world model from images and uses it to learn long-sighted behaviors. Dreamer leverages its world model to efficiently learn behaviors via backpropagation through model predictions.
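To make "backpropagation through model predictions" concrete, here is a toy sketch with a hand-written reverse pass. All of it is assumed for illustration: the scalar dynamics `s + a`, the linear policy `a = theta * s`, the reward `-s**2`, and the function names are hypothetical, and a real agent would use neural networks with an automatic differentiation library rather than a manual derivation. The idea it shows is that, because the model is differentiable, the gradient of the imagined rewards with respect to the policy parameter can be computed by walking backward through the predicted sequence.

```python
from typing import List


def rollout(theta: float, s0: float, horizon: int) -> List[float]:
    """Imagine a sequence with toy dynamics s' = s + a and policy a = theta * s."""
    states = [s0]
    for _ in range(horizon):
        s = states[-1]
        states.append(s + theta * s)
    return states


def objective(theta: float, s0: float, horizon: int) -> float:
    """Sum of imagined rewards, where each reward is -s**2 at the next state."""
    return -sum(s * s for s in rollout(theta, s0, horizon)[1:])


def grad_backprop(theta: float, s0: float, horizon: int) -> float:
    """Reverse pass: propagate reward gradients backward through the rollout."""
    states = rollout(theta, s0, horizon)
    grad_theta = 0.0
    carry = 0.0  # gradient flowing back from later time steps
    for t in reversed(range(horizon)):
        grad_state = -2.0 * states[t + 1] + carry  # d objective / d s_{t+1}
        grad_theta += grad_state * states[t]       # since s_{t+1} = (1 + theta) * s_t
        carry = grad_state * (1.0 + theta)         # pass gradient on to s_t
    return grad_theta


theta = 0.2
gradient = grad_backprop(theta, s0=1.0, horizon=5)
improved_theta = theta + 1e-3 * gradient  # one small gradient-ascent step
```

A single gradient step uses information from every imagined time step at once, which is why this can be more efficient than searching over candidate action sequences.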

Posted by Danijar Hafner, Student Researcher, Google Research

Research into how artificial agents can choose actions to achieve goals is making rapid progress in large part due to the use of reinforcement learning (RL). Model-free approaches to RL, which learn to predict successful actions through trial and error, have enabled DeepMind's DQN to play Atari games and AlphaStar to beat world champions at StarCraft II, but require large amounts of environment interaction, limiting their usefulness for real-world scenarios. In contrast, model-based RL approaches additionally learn a simplified model of the environment. This world model lets the agent predict the outcomes of potential action sequences, allowing it to play through hypothetical scenarios to make informed decisions in new situations, thus reducing the trial and error necessary to achieve goals.
